Join ARGS - AI Alignment

Tech figures such as Elon Musk and Geoffrey Hinton have voiced concern about the risks posed by unaligned AI. Join a talk by Simon Koser on AI alignment, the research field that aims to ensure AI systems act in line with human values. The talk will open with an introduction to alignment, examining the current machine learning paradigm and why it might fail.

Learn about possible pitfalls such as reward hacking, misgeneralization, reward misspecification, latent knowledge, and instrumental convergence. We'll delve into examples of how algorithms can trick human evaluators' intuition, leading to ineffective feedback.

Discover potential solution agendas such as interpretability, RLHF/RLAIF, "Constitutional AI", adversarial techniques, and more. We'll do a deep-dive case study on ELK (Eliciting Latent Knowledge), one approach to AI alignment, and see where it fails.

We'll also address other vital considerations: the feasibility of an AI pause, China's role in AI development, computational overhang, and the importance of incentives. Gain actionable advice on AI alignment and a better grasp of the key research organisations in the field.

This talk is a good stepping stone for anyone keen on replicating papers, doing AI alignment research, or working in the field. Get up to speed on the main alignment research areas and take a step towards safer AI. Lunch will be served to the first 30 people who arrive.

📝 Registration

Sorry, the event has already taken place!

Ping us at contact@kthais.com if you need help.

Time: May 24, 2023 12:00 - 13:00

📣 Speaker

Simon Koser is a first-year Industrial Engineering student at KTH interested in artificial intelligence and its recent developments. He is an Open Philanthropy future studies grant recipient and is now founding args[] at KTH. He has also taken part in an AI safety workshop in San Francisco, where he met leading researchers in the field.

Room: Torget

Location: KTH Innovation, Stockholm, Sweden

Become a member

Join the KTH AI Society and gain access to Slack, where you can communicate with others interested in the same field and get quick insight into the organization!

Latest news

The Intersection of AI and the Human Brain

A longstanding aspiration of researchers has been to create Artificial...

Navigating the New Energy Era

By Yuhui Gan Artificial Intelligence (AI) technologies are revolutionizing the...

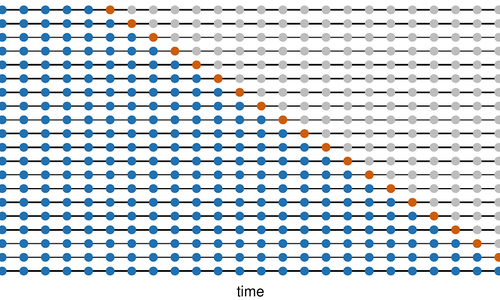

An Introduction to Time Series Forecasting

What is a time series? Many of the real world...